What is a Convolutional Neural Network?

Convolutional neural networks (CNNs) are a deep learning architecture specialized for analyzing visual data. They deliver high-performance solutions in areas such as object recognition and process automation, and they are widely used with transfer learning. Read on to learn what a convolutional neural network is, how it works, how it differs from an RNN, and what benefits it offers.

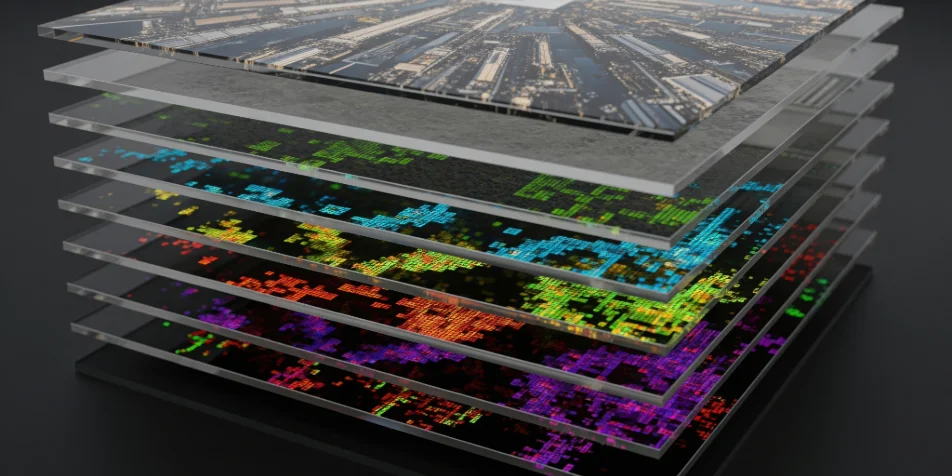

In its simplest form, a Convolutional Neural Network (CNN, also called a ConvNet) is a deep learning architecture developed specifically to analyze images, video, and other data that contains visual patterns. Unlike a traditional fully connected network, it does not treat the input as one flat vector; instead, it exploits local correlations and represents them as feature maps. What distinguishes the CNN architecture is its ability to automatically extract both low-level features (edges, corners) and high-level features (textures, object parts) through a hierarchy of layers. As a result, it identifies a photo of a cat not just by looking at color values, but by first learning lines, then ears and eyes, and finally the concept of a "cat."

The convolution layer at the heart of the architecture performs feature extraction by sliding small kernels (filters) of a fixed size over the input matrix; the step size of this sliding is called the stride. Each filter focuses on capturing a specific feature. The early layers capture low-level visual features, and as the network deepens, the feature maps turn into more complex and abstract semantic representations.
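
The sliding-kernel idea can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the `conv2d` helper, the 4x4 toy image, and the hand-picked `vertical_edge` kernel are hypothetical, there is no padding, and a trained CNN would learn its kernel weights rather than have them written by hand. Real projects would normally rely on a deep learning library instead.

```python
def conv2d(image, kernel, stride=1):
    """Slide `kernel` over `image` (no padding) and return the feature map.

    Like most deep learning libraries, this computes a cross-correlation:
    the kernel is not flipped before it is applied.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = (len(image) - kh) // stride + 1
    out_w = (len(image[0]) - kw) // stride + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the local patch currently under the kernel.
            acc = 0
            for a in range(kh):
                for b in range(kw):
                    acc += image[i * stride + a][j * stride + b] * kernel[a][b]
            row.append(acc)
        feature_map.append(row)
    return feature_map


# Toy 4x4 image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, vertical_edge, stride=1)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is non-zero only in the middle column, which is exactly where the dark-to-bright edge sits in the image.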

Another component frequently used in CNN structures is the pooling layer. Pooling reduces the spatial dimensions of the feature maps by summarizing them. This both lowers the computational cost and makes the model more robust to small positional changes. In summary, Convolutional Neural Networks work similarly to the human visual system: they first perceive small details and then combine these details to make sense of the big picture. Thanks to these properties, they have become a foundational technology in fields such as facial recognition, object detection, medical image analysis, and autonomous vehicle systems.
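
As a rough illustration of pooling, the sketch below uses max pooling with non-overlapping 2x2 windows, which is one common choice and is assumed here. The `max_pool2d` helper and the two toy feature maps are hypothetical.

```python
def max_pool2d(feature_map, size=2):
    """Keep the maximum of each non-overlapping size x size window."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(
                feature_map[i * size + a][j * size + b]
                for a in range(size)
                for b in range(size)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# A strong activation (9) in the top-left corner ...
original = [
    [9, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 5],
    [0, 0, 0, 0],
]
# ... and the same map with that activation shifted one pixel to the right.
shifted = [
    [0, 9, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 5],
    [0, 0, 0, 0],
]

pooled_original = max_pool2d(original)
pooled_shifted = max_pool2d(shifted)
print(pooled_original)  # [[9, 0], [0, 5]]
print(pooled_shifted)   # [[9, 0], [0, 5]]
```

Both inputs pool to the same 2x2 map, which shows the robustness to small shifts. The robustness is limited: a shift that moves the activation across a window boundary would change the pooled output.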

Now that the question of what a CNN is has been answered, the following sections explain how the system works.

How Does a ConvNet Work?

ConvNets work by taking a raw input and transforming it into increasingly meaningful layers of representation. The model does not know in advance what to look for; it learns the relevant features itself during training.

At the input layer, the raw image is represented as a multi-dimensional matrix (tensor) in which each element is a pixel intensity. At this stage, the image is merely a matrix of numbers for the model. Then the convolution layers come into play. The small filters in these layers are slid over the image, and each filter produces a feature map that indicates where a specific pattern occurs in the image.

The outputs of the convolution layer are usually passed through an activation function such as ReLU (Rectified Linear Unit), which makes the model non-linear. This allows the network to distinguish not only simple patterns but also more complex, non-linear visual relationships.

In the next stage, pooling layers step in. Pooling shrinks the feature maps while preserving the most dominant information. This increases the model's tolerance to small spatial shifts, lowers the computational load, and can help reduce the risk of overfitting. As this convolution and pooling cycle is repeated, the model starts from simple edges and lines and moves on to increasingly complex structures. In the deepest layers, high-level concepts such as shapes, objects, or scenes are learned.
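
Two points from this section can be made concrete with a short sketch: what ReLU does to a feature map, and how the spatial size shrinks as the convolution and pooling cycle repeats. The numbers are assumptions chosen for illustration (a 32x32 input, 3x3 kernels with stride 1 and no padding, 2x2 pooling), and the helper names are hypothetical.

```python
def relu(feature_map):
    """Replace every negative activation with zero."""
    return [[max(0, value) for value in row] for row in feature_map]


def conv_output_size(n, kernel, stride=1):
    """Side length after an unpadded convolution over an n x n input."""
    return (n - kernel) // stride + 1


def pool_output_size(n, size=2):
    """Side length after non-overlapping size x size pooling."""
    return n // size


activated = relu([[-3, 2], [0, -1]])
print(activated)  # [[0, 2], [0, 0]]

# Track the side length through two convolution + pooling stages.
size = 32
sizes = [size]
for _ in range(2):
    size = conv_output_size(size, kernel=3)
    sizes.append(size)
    size = pool_output_size(size)
    sizes.append(size)

print(sizes)  # [32, 30, 15, 13, 6]
```

The feature maps get smaller at every stage, so each value in a deep layer summarizes a larger region of the original image.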

After feature extraction is complete, the resulting feature maps are flattened and passed to the fully connected layers, where the classification decision is made. These layers combine the learned features to reach the final decision; for example, this is where the network determines which class an image belongs to. In short, ConvNets work by transforming raw pixels into meaningful and distinctive representations. This structure allows the model both to learn with high accuracy and to generalize to new data.
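
The flatten-then-classify step might look like the sketch below. Everything specific in it is an assumption for illustration: the single 2x2 feature map, the hand-written weights and biases, the two class names, and the use of softmax to turn scores into probabilities (a common choice that the text above does not prescribe).

```python
import math


def flatten(feature_maps):
    """Turn a list of 2-D feature maps into one flat feature vector."""
    return [value for fmap in feature_maps for row in fmap for value in row]


def dense(inputs, weights, biases):
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [
        sum(w * x for w, x in zip(weight_row, inputs)) + bias
        for weight_row, bias in zip(weights, biases)
    ]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical output of the feature extraction stage: one 2x2 map.
feature_maps = [[[1.0, 0.0], [0.0, 2.0]]]
features = flatten(feature_maps)  # [1.0, 0.0, 0.0, 2.0]

# Hypothetical weights; in a real network these are learned in training.
class_names = ["cat", "dog"]
weights = [
    [0.5, 0.0, 0.0, 0.5],   # row producing the "cat" score
    [-0.5, 0.0, 0.0, 0.0],  # row producing the "dog" score
]
biases = [0.0, 0.0]

scores = dense(features, weights, biases)  # [1.5, -0.5]
probabilities = softmax(scores)
predicted = class_names[probabilities.index(max(probabilities))]
print(predicted)  # cat
```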

In brief, a ConvNet works through the following steps:

- Image or visual data is transferred to the input layer.

- Convolution layers detect local patterns on the image.

- Activation functions provide the model with non-linear learning capability.

- Pooling layers reduce the size of feature maps and preserve the most important information.

- These layers are repeated to learn more complex visual features.

- Learned features are transferred to fully connected layers.

- Final classification or prediction is made at the output layer.

Differences Between CNN and RNN

The comparison table below summarizes the fundamental differences between Convolutional Neural Networks (CNN) and Recurrent Neural Networks (RNN).

| Feature | CNN (Convolutional Neural Network) | RNN (Recurrent Neural Network) |
|---|---|---|
| Primary Purpose | To detect patterns and features in visual and spatial data | To learn relationships in sequential and time-dependent data |
| Data Type | Images, video frames, visual matrices | Text, audio, time series |
| Working Logic | Analyzes data through filters by dividing it into local regions | Proceeds by remembering information from previous steps |
| Dependency Structure | Focuses on spatial dependencies | Focuses on temporal dependencies |
| Memory Mechanism | Does not directly store past information | Can hold previous inputs in its memory |
| Parallel Processing | Has high parallel processing capacity | Parallel processing is limited due to the sequential structure |
| Common Use Cases | Object recognition, facial recognition, image classification | Language modeling, speech recognition, machine translation |
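
The "Memory Mechanism" and "Parallel Processing" rows can be illustrated with a small sketch. It assumes a single-unit recurrent cell with a tanh activation and hand-picked scalar weights, and it uses a one-dimensional convolution to keep the comparison short; `rnn_forward`, `conv1d`, and all the numbers are hypothetical.

```python
import math


def rnn_forward(inputs, w_in=0.5, w_hidden=0.8):
    """Single-unit recurrent cell: each step reuses the previous state."""
    hidden = 0.0
    states = []
    for x in inputs:  # steps must run in order
        hidden = math.tanh(w_in * x + w_hidden * hidden)
        states.append(hidden)
    return states


def conv1d(inputs, kernel):
    """Each output depends only on its own local window of inputs."""
    k = len(kernel)
    return [
        sum(inputs[i + a] * kernel[a] for a in range(k))
        for i in range(len(inputs) - k + 1)
    ]


# A single impulse at the first time step, followed by silence.
sequence = [1.0, 0.0, 0.0, 0.0]

rnn_states = rnn_forward(sequence)
conv_out = conv1d(sequence, [1.0, -1.0])

print(rnn_states)  # every state stays above zero: the impulse is remembered
print(conv_out)    # [1.0, 0.0, 0.0]
```

The recurrent states stay non-zero long after the impulse because each step depends on the one before it, which is also why the loop cannot be parallelized across time steps. The convolution outputs are independent of one another, so they could be computed in any order or all at once.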
