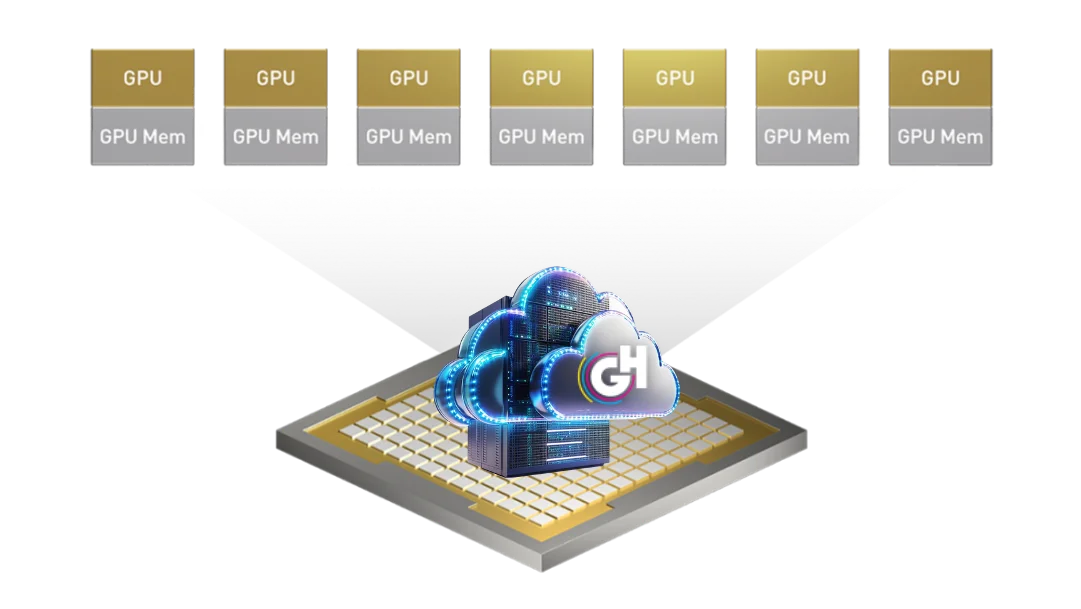

GPU as a Service - H Series

GPU infrastructure hosted in Turkey, delivered from a highly available and KVKK-compliant cloud platform, purpose-built for your AI and high-performance computing workloads.

Cloud-based GPU resources hosted entirely within Turkey and fully compliant with local regulations.

As GlassHouse Cloud, we deliver a secure, scalable, and differentiated technology experience through our GPU as a Service (GPUaaS) platform, which is hosted entirely in Turkey, compliant with KVKK and sector-specific regulations, and backed by a high-availability architecture.

We reinforce data security with geographically redundant infrastructure and 24/7 monitoring, ensuring full compliance with all KVKK requirements. With GlassHouse Cloud GPU as a Service, you can confidently run AI workloads, large language models (LLMs), computer vision, video rendering, design and product development pipelines, scientific computing, and machine learning operations on NVIDIA’s latest GPU architecture.

With the GlassHouse Cloud platform and isolated GPU partitioning (MIG), we maximize both performance and security across your operational workflows.

Leave infrastructure management, system maintenance, version updates, and capacity planning to us—focus your time and resources on innovation with GlassHouse Cloud GPU as a Service!


Discover the features of our GPU as a Service - H Series.

Unveil the advantages of GlassHouse GPU as a Service - H Series!

NVIDIA GPUs deliver significantly higher speed and performance than traditional CPUs, completing complex AI/ML, LLM, Computer Vision, and NLP workloads in a fraction of the time thanks to their parallel processing architecture. With MIG (Multi-Instance GPU) technology, GPUs can be segmented into isolated resource units, providing optimized performance for each workload. Thanks to automatic scaling, capacity can be increased or decreased on demand as project needs change, ensuring maximum efficiency across workloads.
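As a rough illustration of how automatic scaling can size capacity, the sketch below applies the proportional rule used by the Kubernetes Horizontal Pod Autoscaler (replica count scaled by observed versus target utilization). This is an assumption for illustration only, not a description of GlassHouse's scaling mechanism; the function name and the min/max bounds are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_util_pct: int, target_util_pct: int,
                     min_replicas: int = 1, max_replicas: int = 16) -> int:
    """Proportional scaling rule in the style of the Kubernetes HPA.

    Utilization is passed as an integer percentage to avoid float rounding surprises.
    The real HPA also ignores small deviations (a tolerance band); that is omitted here.
    """
    if target_util_pct <= 0:
        raise ValueError("target_util_pct must be positive")
    desired = math.ceil(current_replicas * current_util_pct / target_util_pct)
    # Clamp to the configured bounds (the defaults here are hypothetical).
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    print(desired_replicas(4, 90, 60))  # 6: scale out under load
    print(desired_replicas(4, 20, 60))  # 2: scale in when mostly idle
```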

Instead of investing in your own GPU hardware, you can avoid high CAPEX costs as well as ongoing maintenance and management expenses by leveraging cloud-based GPU resources. GlassHouse handles all specialized operational processes—including hardware management, updates, cooling, energy optimization, and capacity planning—while your IT teams focus on strategic initiatives and innovation. With a pay-as-you-go model, you can maximize budget efficiency.

Operate seamlessly with a geographically redundant, 24/7 accessible infrastructure. Cloud-based GPU resources ensure continuous uptime through automated failover and high-availability architectures, safeguarding the resilience of your systems.

GlassHouse GPUaaS is hosted in data centers within Turkey and fully meets the requirements of KVKK as well as those of heavily regulated industries such as finance and the public sector. Your sensitive and personal data is processed and protected in full compliance, backed by local operational assurance.

Access GPU resources remotely with ease through convenient interfaces such as SSH, APIs, and the cloud console. Achieve rapid workflow adaptation, agility in development processes, and seamless integration across applications.


Frequently Asked Questions

A GPU (Graphics Processing Unit) is a specialized processor designed to execute graphics and other computationally intensive tasks through parallel processing. In Artificial Intelligence (AI) and Machine Learning (ML) projects, GPUs deliver significantly higher speed and performance than CPUs. For Large Language Models (LLMs) in particular, they accelerate projects by running large-scale mathematical operations, such as matrix multiplications, across thousands of cores in parallel, dramatically shortening computation times.
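To show why matrix multiplication maps so well onto parallel hardware, here is a minimal pure-Python sketch: every element of the output matrix is an independent dot product, so rows (or individual elements) can be computed at the same time. Python threads are used only to make that independence explicit; because of the global interpreter lock they will not actually speed this up, whereas a GPU runs thousands of such dot products simultaneously.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List

Matrix = List[List[float]]


def matmul_row(a_row: List[float], b: Matrix) -> List[float]:
    # Each output element is the dot product of one row of A with one column of B.
    return [sum(x * y for x, y in zip(a_row, col)) for col in zip(*b)]


def matmul(a: Matrix, b: Matrix, workers: int = 4) -> Matrix:
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions must match")
    # Output rows do not depend on each other, so they are dispatched as independent tasks.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: matmul_row(row, b), a))


if __name__ == "__main__":
    print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```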

GlassHouse GPU as a Service (GPUaaS) is a high-availability, KVKK-compliant cloud GPU infrastructure hosted entirely within data centers in Turkey. It gives enterprises access to NVIDIA H100 GPUs (Hopper architecture) through an OPEX model, eliminating the need for a heavy up-front hardware investment (CAPEX).

The NVIDIA H100 is built on the Hopper architecture and delivers 2x to 7x faster performance than the previous-generation A100. Its 80 GB of HBM3 (High Bandwidth Memory) provides memory bandwidth measured in terabytes per second, which prevents memory bottlenecks when working with massive datasets and provides exceptional power for LLM training and inference.

MIG (Multi-Instance GPU) is a technology that allows a single physical NVIDIA H100 GPU to be partitioned into up to 7 independent instances, isolated at the hardware level. Unlike time-shared vGPU solutions, MIG assigns fully dedicated and isolated resources to each workload, eliminating the "noisy neighbor" problem and offering guaranteed performance.
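The sketch below shows how a workload's memory need might be matched to a MIG slice. The profile table lists the nominal H100 80GB GPU-instance profiles as we understand them from NVIDIA's MIG documentation; treat it as an assumption and confirm the profiles available on a given host with `nvidia-smi mig -lgip`. The helper names are hypothetical.

```python
# Nominal H100 80GB MIG GPU-instance profiles: name -> (memory in GB, max instances per GPU).
# Assumption: based on NVIDIA's MIG user guide; verify on the host with `nvidia-smi mig -lgip`.
H100_80GB_MIG_PROFILES = {
    "1g.10gb": (10, 7),
    "1g.20gb": (20, 4),
    "2g.20gb": (20, 3),
    "3g.40gb": (40, 2),
    "4g.40gb": (40, 1),
    "7g.80gb": (80, 1),
}


def compute_slices(profile: str) -> int:
    # "3g.40gb" -> 3 compute slices (out of 7 on a full H100).
    return int(profile.split("g.")[0])


def smallest_fitting_profile(required_gb: float) -> str:
    """Pick the profile with the least memory, then the fewest compute slices, that fits."""
    candidates = [
        (mem_gb, compute_slices(name), name)
        for name, (mem_gb, _max_instances) in H100_80GB_MIG_PROFILES.items()
        if mem_gb >= required_gb
    ]
    if not candidates:
        raise ValueError(f"{required_gb} GB does not fit in any single MIG profile")
    return min(candidates)[2]


if __name__ == "__main__":
    for need in (8, 15, 35, 70):
        name = smallest_fitting_profile(need)
        print(need, "GB ->", name, "| up to", H100_80GB_MIG_PROFILES[name][1], "per GPU")
```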

VRAM (Video RAM) is the GPU’s fast, dedicated memory, which holds the data used in parallel calculations. The larger the LLM (in terms of parameter count), the more VRAM it requires. If VRAM is insufficient, the model either fails to run or must fall back on much slower system RAM, resulting in significant performance loss.
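A common back-of-the-envelope estimate multiplies the parameter count by the bytes per parameter for the chosen precision, then adds headroom for activations, the KV cache, and runtime buffers. The 20% overhead factor below is an assumption for illustration; real requirements depend on batch size, context length, and the serving framework.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def estimate_vram_gb(num_params: float, dtype: str = "bf16", overhead: float = 1.2) -> float:
    """Rough VRAM estimate: model weights times an assumed overhead multiplier."""
    weights_gb = num_params * BYTES_PER_PARAM[dtype] / 1e9
    return weights_gb * overhead


def fits_in_vram(num_params: float, vram_gb: float, dtype: str = "bf16",
                 overhead: float = 1.2) -> bool:
    return estimate_vram_gb(num_params, dtype, overhead) <= vram_gb


if __name__ == "__main__":
    # A 7B-parameter model in bf16: about 14 GB of weights before overhead.
    print(round(estimate_vram_gb(7e9, "bf16", overhead=1.0), 1))  # 14.0
    # A 70B model in bf16 (about 140 GB of weights) does not fit on one 80 GB GPU...
    print(fits_in_vram(70e9, 80))  # False
    # ...but at 4-bit precision (about 35 GB of weights) it does.
    print(fits_in_vram(70e9, 80, dtype="int4"))  # True
```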

Model Training: The development phase in which a model is trained on billions of data points, a process that can take weeks and requires the highest computational power.

Inference: Generating responses from a trained model in real time (e.g., ChatGPT-like chatbots), where low latency is critical. GlassHouse GPUaaS offers a scalable, high-performance infrastructure for both workload types.
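The gap between the two workload types can be made concrete with a widely used rule of thumb for dense transformer models: training costs roughly 6 FLOPs per parameter per training token, while generating one token at inference costs roughly 2 FLOPs per parameter. The sketch below applies those approximations; the model size, token count, per-GPU throughput, and utilization are hypothetical inputs, not GlassHouse benchmarks.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    # ~6 FLOPs per parameter per token: forward pass (~2) plus backward pass (~4).
    return 6 * num_params * num_tokens


def inference_flops_per_token(num_params: float) -> float:
    # ~2 FLOPs per parameter for a single forward pass.
    return 2 * num_params


def training_days(total_flops: float, num_gpus: int, peak_flops_per_gpu: float,
                  utilization: float) -> float:
    # Wall-clock days, assuming a sustained fraction of peak throughput on every GPU.
    seconds = total_flops / (num_gpus * peak_flops_per_gpu * utilization)
    return seconds / 86_400


if __name__ == "__main__":
    # Hypothetical: 7B parameters, 1 trillion training tokens.
    flops = training_flops(7e9, 1e12)
    print(f"{flops:.2e} FLOPs to train")
    print(f"{inference_flops_per_token(7e9):.2e} FLOPs per generated token")
    # Hypothetical: 8 GPUs at 1e15 FLOP/s peak each, 40% sustained utilization.
    print(round(training_days(flops, 8, 1e15, 0.4), 1), "days")  # roughly 152
```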

Yes. The entire GlassHouse GPU as a Service infrastructure is hosted in Tier III data centers located within Turkey.

The Managed GPU service provided by GlassHouse goes beyond simple hardware access, offering end-to-end support across all operational and technical layers of the GPU ecosystem. The core technical scope of the service is as follows:

GPU Ecosystem and Component Management

The most critical part of the managed service is the management of the software layers required for the GPU to operate efficiently.

Software Stack Installation and Patching: GlassHouse experts install, manage, and update core GPU ecosystem components such as CUDA, the NVIDIA Container Toolkit, and Docker.

Periodic Updates (Patch Management): Patches for the operating system, GPU firmware, and drivers are applied regularly in 3-month cycles, in coordination with the customer.

NVIDIA Compatibility: Operating system updates supported by NVIDIA, the GPU manufacturer, are continuously monitored and integrated into the system.
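One routine check behind this kind of compatibility tracking is confirming that the installed driver is new enough for the CUDA release a workload needs. The minimum versions below reflect NVIDIA's published minor-version-compatibility minimums for CUDA 11.x and 12.x on Linux as we understand them; treat the table and the helper names as assumptions and verify against NVIDIA's CUDA compatibility documentation. The installed driver version can be read with `nvidia-smi --query-gpu=driver_version --format=csv,noheader`.

```python
# Assumption: minimum Linux driver per CUDA major version under minor-version compatibility.
# Some newer CUDA features may still require a newer driver than these minimums.
MIN_LINUX_DRIVER_FOR_CUDA_MAJOR = {11: "450.80.02", 12: "525.60.13"}


def parse_version(version: str) -> tuple:
    # "535.104.05" -> (535, 104, 5), so versions compare numerically, not as strings.
    return tuple(int(part) for part in version.strip().split("."))


def driver_supports_cuda(driver_version: str, cuda_version: str) -> bool:
    major = int(cuda_version.split(".")[0])
    minimum = MIN_LINUX_DRIVER_FOR_CUDA_MAJOR.get(major)
    if minimum is None:
        raise ValueError(f"no minimum driver recorded for CUDA {cuda_version}")
    return parse_version(driver_version) >= parse_version(minimum)


if __name__ == "__main__":
    print(driver_supports_cuda("535.104.05", "12.2"))  # True
    print(driver_supports_cuda("470.82.01", "12.0"))   # False
```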

Operating System (OS) and Infrastructure Management

The Managed GPU service also covers the operating system layer running on the hardware (Managed OS).

Installation and Configuration: This includes the installation of the OS, performing baseline configurations, and installing specialized drivers according to customer requirements.

File System and Disk Management: Covers directory management, response to file system failures, and monitoring of disk utilization.

Access and Security: Server access controls are managed, requests related to security vulnerabilities are fulfilled, and system logs are tracked closely.
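Disk utilization monitoring of the kind described above can be as simple as comparing used space to a threshold. This is a minimal sketch using Python's standard `shutil.disk_usage`; the 85% threshold and the message format are hypothetical, and a production setup would feed such values into the monitoring system rather than print them.

```python
import shutil


def disk_utilization_percent(path: str = "/") -> float:
    # shutil.disk_usage returns (total, used, free) in bytes for the file system holding `path`.
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


def check_disk(path: str = "/", threshold_percent: float = 85.0) -> str:
    pct = disk_utilization_percent(path)
    status = "ALERT" if pct >= threshold_percent else "OK"
    return f"{status}: {path} is {pct:.1f}% full (threshold {threshold_percent:.0f}%)"


if __name__ == "__main__":
    print(check_disk("/"))
```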

24/7 Monitoring and Operational Intervention

The service is supported by advanced monitoring mechanisms to guarantee business continuity.

Advanced Monitoring: Beyond standard infrastructure, specific metrics such as GPU VRAM utilization and Power State are monitored 24/7.

Incident Management: In case of access disruptions or server/network issues, immediate intervention is provided in accordance with defined Service Level Agreements (SLAs).

Service Ownership: A "service ownership matrix" is created by identifying the services running on the server to ensure all operational gaps are closed.
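GPU-specific metrics such as VRAM utilization and power state are exposed by `nvidia-smi` in a machine-readable CSV form. The sketch below parses that output and flags GPUs above a memory threshold; the 90% threshold and the sample values are hypothetical, and this is not a description of GlassHouse's monitoring stack.

```python
import csv
import io
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=index,memory.used,memory.total,pstate",
         "--format=csv,noheader,nounits"]


def read_gpu_metrics() -> str:
    # Requires an NVIDIA driver on the host; memory values are reported in MiB.
    return subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout


def parse_gpu_metrics(csv_text: str) -> list:
    rows = []
    for row in csv.reader(io.StringIO(csv_text), skipinitialspace=True):
        if not row:
            continue  # skip blank lines
        index, used, total, pstate = row
        rows.append({"index": int(index), "mem_used_mib": int(used),
                     "mem_total_mib": int(total), "pstate": pstate.strip()})
    return rows


def vram_alerts(rows: list, threshold: float = 0.9) -> list:
    # Indices of GPUs whose VRAM usage is at or above the threshold fraction.
    return [r["index"] for r in rows if r["mem_used_mib"] / r["mem_total_mib"] >= threshold]


if __name__ == "__main__":
    sample = "0, 75000, 81559, P0\n1, 1024, 81559, P8\n"  # illustrative values
    metrics = parse_gpu_metrics(sample)
    print(vram_alerts(metrics))            # [0]
    print([m["pstate"] for m in metrics])  # ['P0', 'P8']
```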

Strategic Advantages

This technical scope gives enterprises a fundamental benefit: eliminating operational burden so they can focus on innovation. Instead of dealing with CUDA version incompatibilities or driver errors in complex LLM (Large Language Model) and AI projects, organizations can accelerate their initiatives by leveraging GlassHouse's ITIL-aligned management model.

A GPU alone is not a complete solution; the layers that feed it data (CPU, RAM, storage, and network) must be powerful enough to keep up. If the dataset is read from slow storage, an H100, whose memory bandwidth reaches terabytes per second, sits "idle" waiting for data, extending project timelines. GlassHouse provides high-performance NVMe SSD storage and 100 Gbps network infrastructure to prevent these bottlenecks.
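The "idle GPU" effect is easy to quantify: data can reach the GPU no faster than the slowest stage of the pipeline that feeds it. This sketch converts a 100 Gbps link to bytes per second and picks the bottleneck stage; the storage and preprocessing throughputs and the dataset size are hypothetical numbers chosen for illustration.

```python
def gbps_to_gb_per_s(gbps: float) -> float:
    # Network links are quoted in gigabits per second; there are 8 bits per byte.
    return gbps / 8


def bottleneck(stage_gb_per_s: dict) -> tuple:
    # The slowest stage caps the rate at which data reaches the GPU.
    name = min(stage_gb_per_s, key=stage_gb_per_s.get)
    return name, stage_gb_per_s[name]


def seconds_per_pass(dataset_gb: float, throughput_gb_per_s: float) -> float:
    # Time to stream the whole dataset through the pipeline once.
    return dataset_gb / throughput_gb_per_s


if __name__ == "__main__":
    stages = {
        "network (100 Gbps)": gbps_to_gb_per_s(100),  # 12.5 GB/s
        "storage (hypothetical slow tier)": 2.0,
        "preprocessing (hypothetical)": 6.0,
    }
    name, rate = bottleneck(stages)
    print(name, rate)                    # storage (hypothetical slow tier) 2.0
    print(seconds_per_pass(1000, rate))  # 500.0
```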

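In LLMs, the total model size and the number of parameters active in a given calculation can differ. Mixture of Experts (MoE) architectures activate only a subset of their parameters (the selected "experts") for each token, so the compute needed per token is far lower than the total parameter count suggests. This allows massive models (e.g., those occupying 800 GB of disk space) to run efficiently with appropriate VRAM configurations.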
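The difference between total and active parameters in an MoE model follows from simple counting: every expert's weights must be held somewhere (in VRAM, or offloaded), but each token is routed through only a few experts. The layout below (shared parameters plus identical experts, top-k routing) is a simplified, hypothetical model, and the numbers are illustrative rather than those of any specific model.

```python
def moe_param_counts(shared_params: int, params_per_expert: int,
                     num_experts: int, experts_per_token: int) -> tuple:
    """Return (total, active-per-token) parameter counts for a simplified MoE model."""
    if not 0 < experts_per_token <= num_experts:
        raise ValueError("experts_per_token must be between 1 and num_experts")
    # Memory footprint scales with the total: all experts must be stored.
    total = shared_params + num_experts * params_per_expert
    # Per-token compute scales with the active count: only the routed experts run.
    active = shared_params + experts_per_token * params_per_expert
    return total, active


if __name__ == "__main__":
    total, active = moe_param_counts(2_000_000_000, 5_000_000_000, 8, 2)
    print(total, active)  # 42000000000 12000000000
    print(f"{active / total:.0%} of parameters active per token")  # 29% of parameters ...
```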

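Retrieval-Augmented Generation (RAG) pipelines working on corporate document sets, and Agentic AI systems that provide intelligent responses, can produce highly accurate results with low latency without requiring massive VRAM capacity, because relevant knowledge is retrieved at query time instead of being stored in the parameters of a very large model. These methods reduce costs by using GPU parallel processing power as efficiently as possible.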
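The retrieval step is what lets a RAG system answer from corporate documents without a very large model: relevant passages are found first and handed to the model as context. The toy sketch below ranks documents by bag-of-words cosine similarity and builds a prompt; production systems typically use embedding models and a vector index instead, and the documents, prompt wording, and function names here are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    # Lowercased word counts; a stand-in for a real embedding model.
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list, k: int = 1) -> list:
    q = tokenize(query)
    return sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)[:k]


def build_prompt(query: str, documents: list, k: int = 1) -> str:
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "Expense reports must be submitted within 30 days.",
        "The VPN requires multi-factor authentication.",
        "Annual leave requests go through the HR portal.",
    ]
    print(build_prompt("How do I submit expense reports?", docs))
```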

Purchasing GPUs requires high CAPEX (Capital Expenditure). With GlassHouse’s model, enterprises manage these costs as OPEX (Operational Expenditure). This eliminates hidden costs such as hardware depreciation, energy, cooling, and maintenance, allowing budgets to be redirected toward innovation.
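A simple way to compare the two models is to convert ownership into a monthly cost (straight-line depreciation plus running costs) and find the usage level at which pay-as-you-go costs the same. Every figure below is hypothetical and not GlassHouse pricing; a real comparison would also account for financing, staffing, utilization, and hardware refresh cycles.

```python
def owned_monthly_cost(purchase_price: float, lifetime_months: int,
                       monthly_running_cost: float) -> float:
    # Straight-line depreciation plus energy, cooling, and maintenance.
    return purchase_price / lifetime_months + monthly_running_cost


def payg_monthly_cost(hourly_rate: float, hours_used: float) -> float:
    # Pay-as-you-go: cost tracks actual usage.
    return hourly_rate * hours_used


def break_even_hours(purchase_price: float, lifetime_months: int,
                     monthly_running_cost: float, hourly_rate: float) -> float:
    # Monthly usage above which owning becomes cheaper than renting (under these assumptions).
    return owned_monthly_cost(purchase_price, lifetime_months, monthly_running_cost) / hourly_rate


if __name__ == "__main__":
    # Hypothetical inputs: 36,000 purchase over 36 months, 500/month running cost, 3.00/hour rate.
    print(owned_monthly_cost(36_000, 36, 500))     # 1500.0
    print(payg_monthly_cost(3.0, 200))             # 600.0
    print(break_even_hours(36_000, 36, 500, 3.0))  # 500.0
```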

No. Thanks to 4th-generation NVLink technology in the NVIDIA H100, up to 8 GPUs can be interconnected, with 900 GB/s of total NVLink bandwidth per GPU. This "Distributed Model Training" capability enables near-linear scaling with minimal performance loss during LLM training on large datasets.
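To see why interconnect bandwidth matters for distributed training, consider data-parallel training, where gradients are summed across GPUs after each step. With a ring all-reduce, each GPU sends and receives about 2(N-1)/N times the gradient size. The sketch estimates that traffic and a lower bound on its transfer time; it assumes the ring algorithm, ignores latency and overlap with computation, and uses 450 GB/s per direction (half of the 900 GB/s aggregate NVLink figure) as an idealized link rate, so real timings will differ. The 7B-parameter model is hypothetical.

```python
def ring_allreduce_bytes_per_gpu(payload_bytes: float, num_gpus: int) -> float:
    # Reduce-scatter plus all-gather: each GPU sends (N-1)/N of the payload in each phase.
    return 2 * (num_gpus - 1) / num_gpus * payload_bytes


def allreduce_seconds(payload_bytes: float, num_gpus: int,
                      bytes_per_s_per_direction: float) -> float:
    # Idealized lower bound: bandwidth only, no latency, no overlap with computation.
    return ring_allreduce_bytes_per_gpu(payload_bytes, num_gpus) / bytes_per_s_per_direction


if __name__ == "__main__":
    grads = 7e9 * 2  # hypothetical: 7B parameters with 2-byte (bf16) gradients = 14 GB
    print(ring_allreduce_bytes_per_gpu(grads, 8) / 1e9)  # 24.5 (GB per GPU per step)
    print(round(allreduce_seconds(grads, 8, 450e9), 3))  # 0.054 (seconds, idealized)
```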

Our service is designed for all sectors requiring high-volume Natural Language Processing (NLP), large-scale Computer Vision, Deep Learning, and Big Data Analytics. It is widely used in industries where data security is critical, such as banking, payment systems, and healthcare.

GlassHouse infrastructure is geographically redundant and monitored 24/7. In the event of a hardware failure, workloads are automatically transferred to healthy GPU resources through N+1 redundancy structures or Kubernetes orchestration, minimizing operational downtime.
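The N+1 idea can be expressed as a capacity check plus a rescheduling step: the cluster should still fit the workload after losing its largest node, and jobs from a failed node are moved to healthy nodes with enough free GPUs. This first-fit sketch is a hypothetical simplification for illustration; it is not how GlassHouse or the Kubernetes scheduler actually places workloads.

```python
def survives_largest_node_failure(node_gpus: dict, required_gpus: int) -> bool:
    # N+1: remaining capacity after losing the biggest node must still cover demand.
    if not node_gpus:
        return False
    return sum(node_gpus.values()) - max(node_gpus.values()) >= required_gpus


def reschedule(jobs: dict, failed_node: str, free_gpus: dict) -> dict:
    """First-fit: move each job on the failed node to the first healthy node with room.

    jobs maps job name -> (node, GPUs needed); returns job name -> node (None if no room).
    """
    free = dict(free_gpus)  # work on a copy so the caller's dict is unchanged
    placement = {}
    for job, (node, gpus) in jobs.items():
        if node != failed_node:
            placement[job] = node
            continue
        placement[job] = None
        for candidate, available in free.items():
            if candidate != failed_node and available >= gpus:
                free[candidate] -= gpus
                placement[job] = candidate
                break
    return placement


if __name__ == "__main__":
    print(survives_largest_node_failure({"node-a": 8, "node-b": 8, "node-c": 8}, 16))  # True
    jobs = {"train": ("node-a", 4), "infer": ("node-b", 2)}
    print(reschedule(jobs, "node-a", {"node-a": 0, "node-b": 2, "node-c": 4}))
    # {'train': 'node-c', 'infer': 'node-b'}
```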

Yes. GlassHouse GPUaaS infrastructure is hosted entirely in data centers within Turkey. This physical locality fully meets the "data residency" and "isolated hosting" requirements of regulators such as BDDK (Banking Regulation and Supervision Agency) and SPK (Capital Markets Board).

A powerful GPU sits "idle," waiting for data, if it is paired with slow storage. To prevent such bottlenecks, GlassHouse backs its GPU units with high-performance NVMe SSD storage and 100 Gbps low-latency network switches, ensuring that data feeding speeds keep pace with the GPU's processing power.